The Macrophonics artist collective has just commenced its Open Source media technologies residency with Sydney-based physical theatre company Legs on the Wall at their Red Box development space. Background information on the project can be found here.

The residency sees the group examine a range of interface technologies and approaches to explore the nexus between theatrical performers and a four-member live media ensemble. The objective is to build a responsive performance environment that gives the theatre performers direct gestural input into the media control system, defining new relationships between the performance ensemble, the media design elements and the media ensemble.

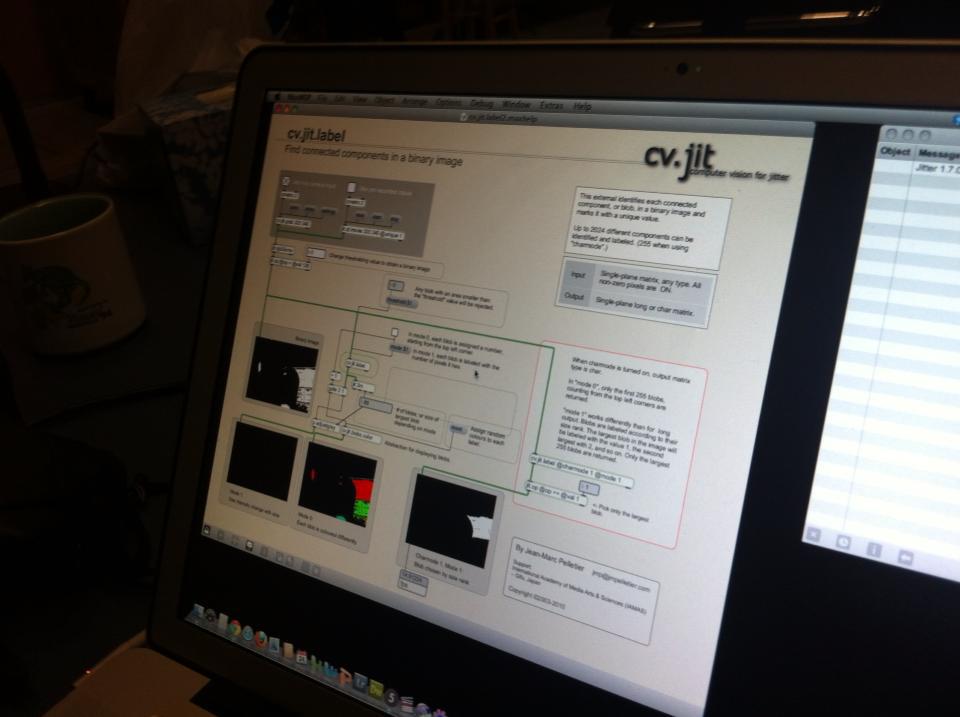

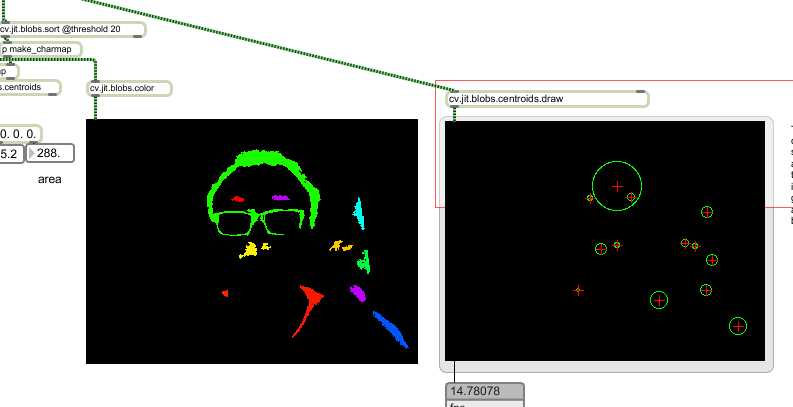

We’ve broadly structured our investigation across two approaches. The first is video tracking and computer vision (using the cv.jit suite of objects for Jitter), where the stage area is analysed and moving objects are tracked.
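To give a rough feel for what tracking movement in a camera image involves, here is a minimal sketch of frame differencing in plain Python. It is not the cv.jit/Jitter patch used in the residency. The function name `motion_centroid`, the `threshold` value and the representation of a frame as a list of rows of greyscale values (0 to 255) are all assumptions made for illustration.

```python
def motion_centroid(prev_frame, curr_frame, threshold=30):
    """Return the (x, y) centre of the pixels that changed between two frames.

    Frames are lists of rows of greyscale values (an assumed format).
    Returns None when no pixel changed by more than `threshold`.
    """
    sum_x = sum_y = count = 0
    for y, (prev_row, curr_row) in enumerate(zip(prev_frame, curr_frame)):
        for x, (p, c) in enumerate(zip(prev_row, curr_row)):
            # A pixel counts as "moving" if its brightness changed enough.
            if abs(c - p) > threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)


# Hypothetical 4x4 frames: a bright 2x2 block appears in the middle.
empty = [[0] * 4 for _ in range(4)]
moved = [row[:] for row in empty]
for yy in (1, 2):
    for xx in (1, 2):
        moved[yy][xx] = 255

centre = motion_centroid(empty, moved)
```

The resulting position could then be mapped onto a media parameter such as pan or brightness. A real system would also need to cope with lighting changes and with several performers moving at once.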

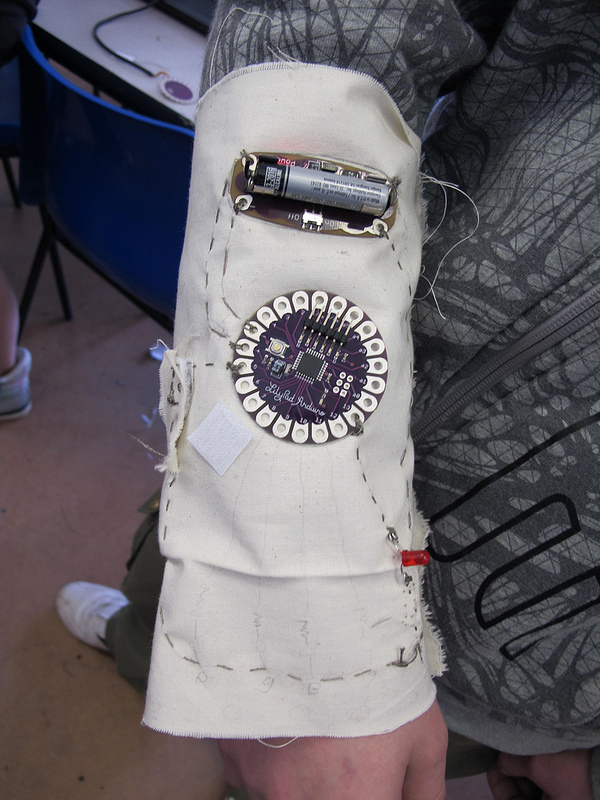

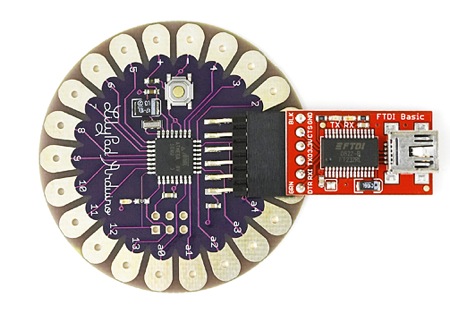

The second area of investigation is wearable garment interfaces that sense movement directly on each performer (accelerometers, pressure and flex sensors, light sensors, etc.) and send data wirelessly to the media ensemble. We are using the LilyPad Arduino platform for this work. The LilyPad is a small, flat implementation of the Arduino platform that can be sewn into garments. Conductive ‘thread’ can then be sewn in ‘tracks’ into the garment to form the links between the device and the attached sensors.

The LilyPad can be run from a battery and can, with some additional hardware, transmit data wirelessly over an ad hoc wireless network.
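Once sensor readings reach the media ensemble's computer, they usually need to be scaled and smoothed before they can drive anything. The sketch below shows one possible way to do that on the receiving side, in Python. It assumes the readings arrive as raw 10-bit analog values (0 to 1023, the range of an Arduino analog input); the names `normalise` and `Smoother` and the `alpha` setting are hypothetical and not part of the project's actual setup.

```python
ADC_MAX = 1023  # full-scale value of a 10-bit analog reading


def normalise(raw, lo=0, hi=ADC_MAX):
    """Clamp a raw reading to [lo, hi] and scale it to the range 0.0 to 1.0."""
    clamped = max(lo, min(hi, raw))
    return (clamped - lo) / (hi - lo)


class Smoother:
    """Exponential moving average to tame jittery sensor data."""

    def __init__(self, alpha=0.2):
        self.alpha = alpha  # higher = more responsive, lower = smoother
        self.value = None

    def update(self, sample):
        if self.value is None:
            # The first sample initialises the filter.
            self.value = sample
        else:
            self.value = self.alpha * sample + (1 - self.alpha) * self.value
        return self.value


# Hypothetical stream of flex sensor readings from a garment.
smoother = Smoother(alpha=0.5)
control_values = [smoother.update(normalise(r)) for r in (0, 1023, 1023)]
```

Narrowing `lo` and `hi` to the range a particular sensor actually produces on the performer's body would make fuller use of the 0.0 to 1.0 control range.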