While Donna builds the wearable top with sensors, Julian is working on ‘musical scenes’. These scenes are modular, with a range of musical elements that can be triggered or manipulated in an improvisational manner, and they are designed for non-musician physical theatre performers and acrobats. The wearable top will have a triaxial accelerometer (reading the X, Y and Z axes) mounted on the right arm, a flex sensor on the left elbow, and some buttons. In the meantime, Julian has been using a Wii remote to simulate the accelerometer and buttons. This lets him work on parameter mapping and prototype some a/v scenes before the wearable interface is ready. The Wii remote is a handheld device that contains much of the wearable's functionality (an accelerometer and buttons).

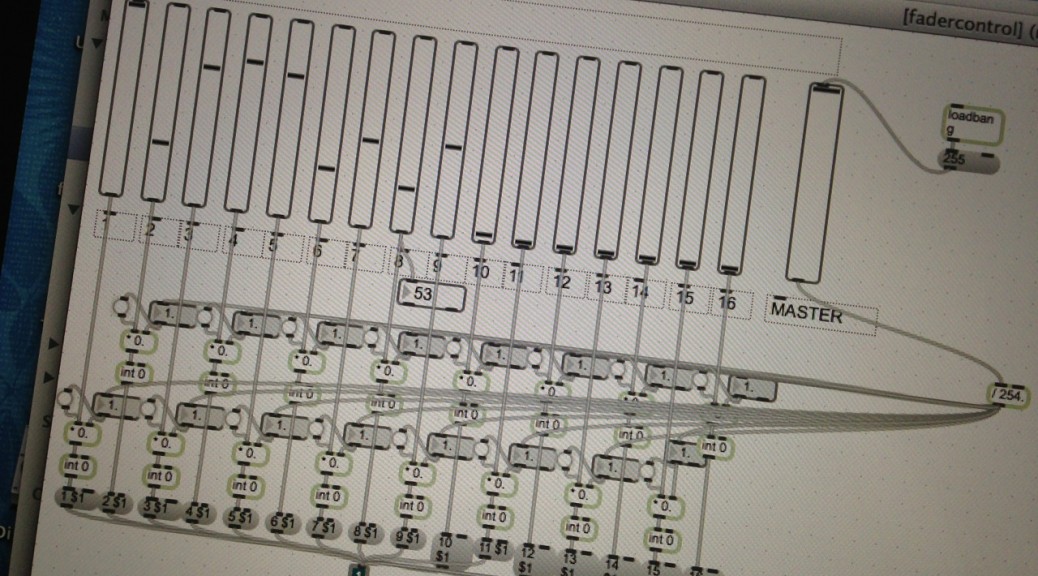

Julian is using the fantastic software OSCulator to take the Bluetooth Wiimote data and convert it to MIDI. He then streams the MIDI into a Max/MSP patch that conditions, scales and routes the data streams before they are sent to Ableton Live to control audio.
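The conditioning and scaling step can be sketched in Python as a rough illustration. This is not Julian's Max patch. It assumes each accelerometer axis arrives as a float in a hypothetical range of -1.0 to 1.0. It smooths the axis with a one-pole low-pass filter to calm jittery readings and then scales it to a 7-bit MIDI controller value (0 to 127). The class name, input range and smoothing factor are all illustrative assumptions.

```python
class AxisConditioner:
    """Smooths and scales one accelerometer axis to a MIDI CC value.

    Hypothetical sketch: the input range and smoothing factor are
    illustrative defaults, not values from the Macrophonics patch.
    """

    def __init__(self, in_min=-1.0, in_max=1.0, smoothing=0.8):
        self.in_min = in_min
        self.in_max = in_max
        self.smoothing = smoothing  # 0 = no smoothing, closer to 1 = heavier
        self.state = None

    def process(self, raw):
        # One-pole low-pass: blend the new reading with the previous output.
        if self.state is None:
            self.state = raw
        else:
            self.state = self.smoothing * self.state + (1.0 - self.smoothing) * raw
        # Clamp to the expected input range before scaling.
        clamped = min(max(self.state, self.in_min), self.in_max)
        # Normalise to 0..1, then scale to the 7-bit MIDI CC range 0..127.
        norm = (clamped - self.in_min) / (self.in_max - self.in_min)
        return round(norm * 127)


if __name__ == "__main__":
    x_axis = AxisConditioner()
    for reading in [0.0, 0.5, 1.0, 1.0, -1.0]:
        print(x_axis.process(reading))
```

Heavier smoothing gives steadier control values at the cost of some lag behind the performer's movement. The right amount is a matter of tuning by feel.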

The system is very robust: today Julian had it working at a distance of over 15 metres. Let’s hope the wireless link from the LilyPad is as solid. Here is an example of the Wii remote being used to control audio. The accelerometer's X, Y and Z outputs control various filter parameters and volume, while the buttons trigger audio events.
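Continuous axes drive parameters, while buttons fire discrete events. That split can be sketched as a small router. The CC numbers, note numbers and button names here are hypothetical placeholders, and the sketch only builds MIDI-style tuples rather than sending anything to a real MIDI port. The key detail is edge detection: a button fires once when it goes from released to pressed, not on every frame it is held down.

```python
# Hypothetical mapping: accelerometer axes -> MIDI CC numbers,
# buttons -> MIDI note numbers. Not the project's actual assignments.
AXIS_TO_CC = {"x": 74, "y": 71, "z": 7}
BUTTON_TO_NOTE = {"A": 36, "B": 38}


class Router:
    def __init__(self):
        # Remember each button's previous state for edge detection.
        self.prev_buttons = {name: False for name in BUTTON_TO_NOTE}

    def route(self, axes, buttons):
        """Return a list of MIDI-style messages for one frame of input.

        axes: dict of axis name -> CC value (0..127), already conditioned.
        buttons: dict of button name -> bool (True while held).
        """
        messages = []
        for axis, value in axes.items():
            if axis in AXIS_TO_CC:
                messages.append(("cc", AXIS_TO_CC[axis], value))
        for name, pressed in buttons.items():
            if name not in BUTTON_TO_NOTE:
                continue
            # Trigger only on the rising edge (released -> pressed).
            if pressed and not self.prev_buttons[name]:
                messages.append(("note_on", BUTTON_TO_NOTE[name], 100))
            self.prev_buttons[name] = pressed
        return messages


if __name__ == "__main__":
    r = Router()
    print(r.route({"x": 64}, {"A": True}))  # [('cc', 74, 64), ('note_on', 36, 100)]
    print(r.route({"x": 65}, {"A": True}))  # [('cc', 74, 65)]: A still held, no retrigger
```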

Macrophonics. Open Source. Wiimote test from Julian Knowles on Vimeo.

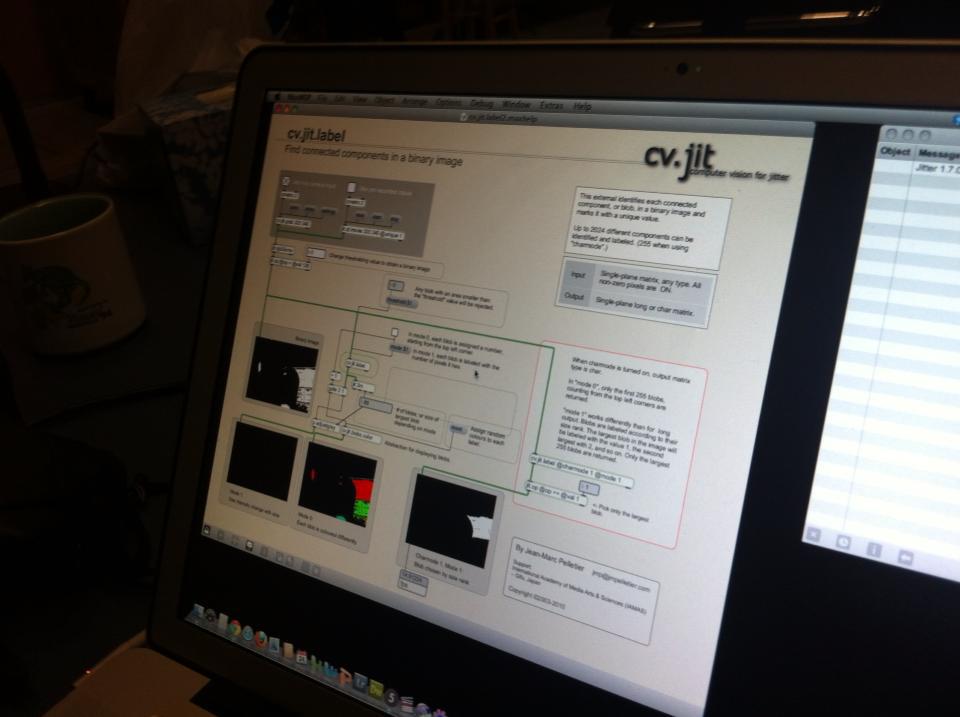

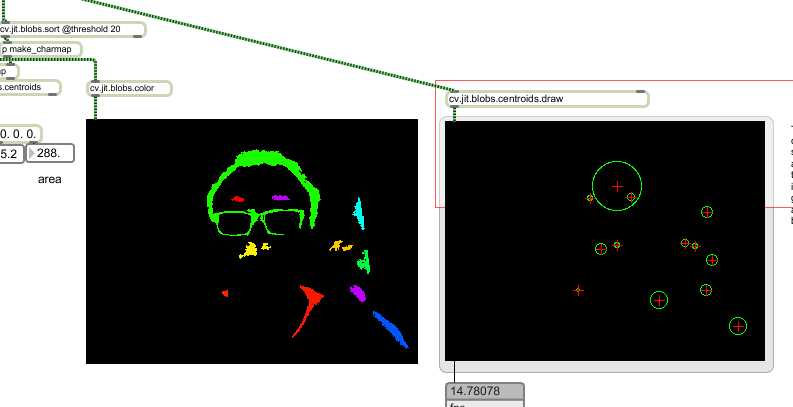

On the video side, Julian has been building a realtime video processing system in the Jitter environment.

The following example shows the Wii remote's accelerometer X, Y and Z parameters mapped to video processing, driving a patch Julian has written in Jitter. A QuickTime movie is used as input, and the Wiimote drives the real-time processing. No audio processing is taking place in this example; Julian is just listening to some music while he programs and tests the video system. He has also extended the Jitter patch so it can process a live camera input.

Macrophonics. Open source. Prototyping video processing with wii remote

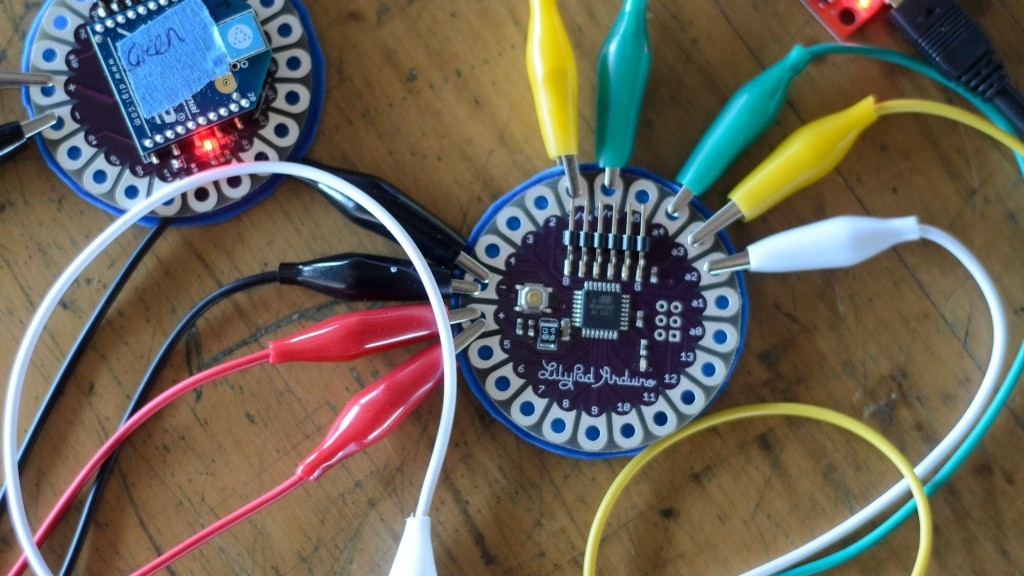

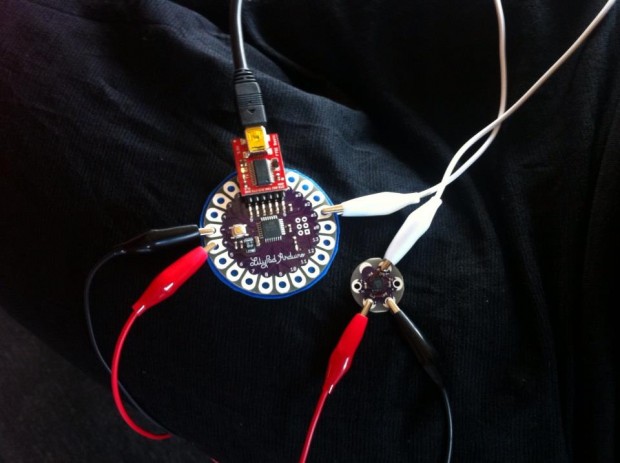

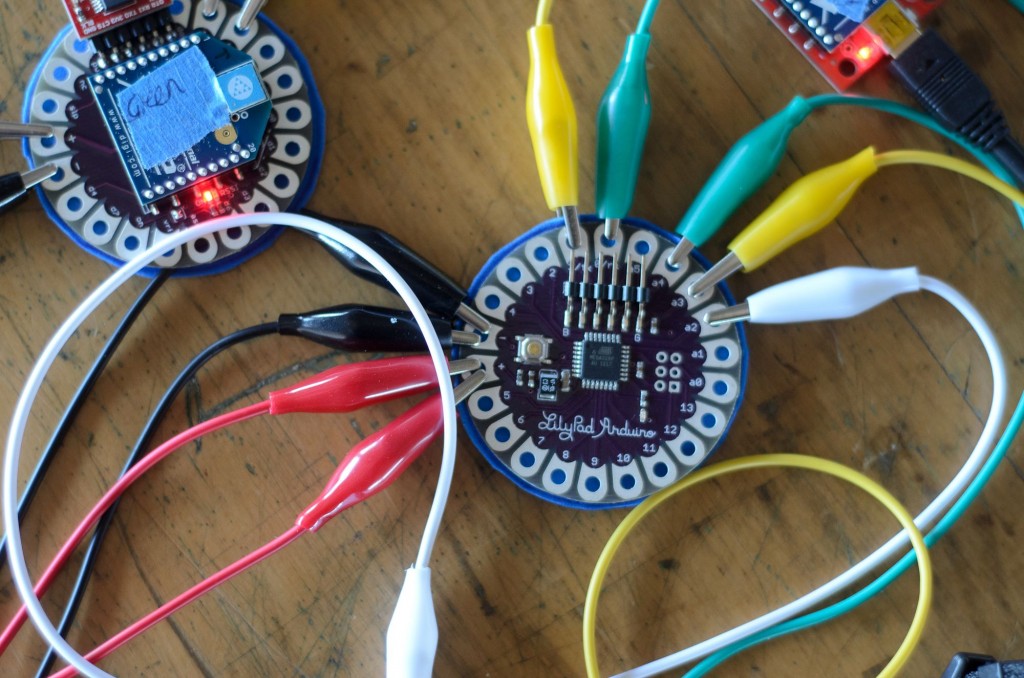

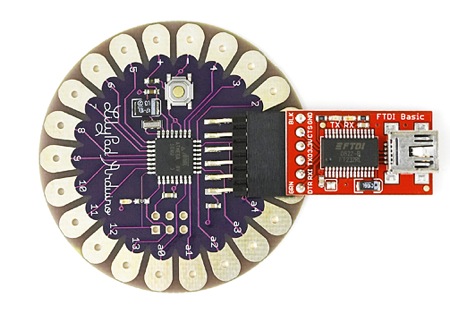

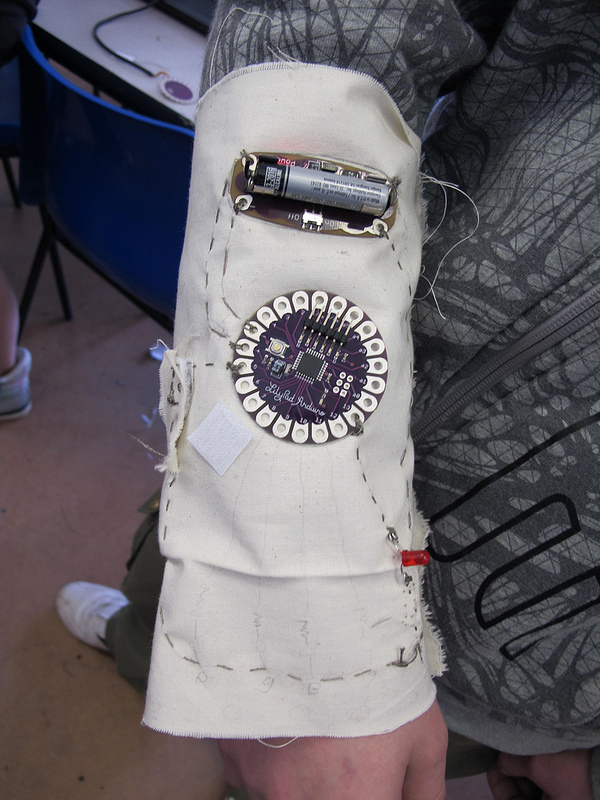

Donna has been working away on the wearable interface, which will contain the functionality described above (plus more). She has been designing the sensor layout and working out how to connect everything within the constraints of the LilyPad system. The conductive thread used to sew in the sensors and connect them to the main board has quite a high resistance, so runs need to be kept short. Likewise, the run between the battery board and the LilyPad/XBee board needs to be short, so that as little voltage as possible is lost along the way and maximum current stays available. Runs of conductive thread cannot cross one another, because the uninsulated thread will short out.
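Ohm's law shows why short runs matter. A thread run's resistance grows linearly with its length, and the current drawn through it drops voltage across the thread before reaching the board. Current also flows out along the supply thread and back along the ground thread, so the length carrying current is twice the run. The figures below are hypothetical assumptions chosen only to illustrate the trend: about 30 ohms per metre of thread, a 3.7 V battery and a fixed 50 mA load. Real conductive thread varies considerably.

```python
def thread_voltage_drop(run_length_m, ohms_per_m, current_a):
    """Voltage lost across a conductive-thread supply run (Ohm's law, V = I * R).

    Current flows out along the supply thread and back along the ground
    thread, so the thread carrying it is twice the run length. The load
    is treated as drawing a fixed current (a simplification).
    """
    resistance = 2 * run_length_m * ohms_per_m
    return current_a * resistance


# Hypothetical values, for illustration only.
SUPPLY_V = 3.7      # battery voltage
OHMS_PER_M = 30.0   # thread resistance per metre
LOAD_A = 0.05       # current drawn by board + radio

for length in (0.05, 0.10, 0.20, 0.40):
    drop = thread_voltage_drop(length, OHMS_PER_M, LOAD_A)
    print(f"{length * 100:.0f} cm run: {drop:.2f} V lost, "
          f"{SUPPLY_V - drop:.2f} V left at the board")
```

With these assumed numbers, a 40 cm run loses 1.2 V, roughly a third of the supply, while a 5 cm run loses only 0.15 V.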

We’re hoping to complete the wearable interface in the next day or two so we can start testing it with the modular musical materials. For the showing, we’ll demonstrate three a/v ‘scenes’ in sequence, each taking a different approach to the relationship between gesture and media.

The first scene will be based on drone and video synthesis, with the performers' stage positions driving the processing. This scene will have a very strong correlation between the audio and video processing gestures, and it will allow multiple performers to move in relation to ultrasonic range finder sensors.
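Ultrasonic range finders tend to produce occasional spurious readings, for example from stray echoes or missed pings. A common approach is to median-filter the distance before mapping it to a parameter. The sketch below assumes a hypothetical usable sensor range of 20 to 400 cm and maps nearer performers to higher values. The window size, range limits and direction of the mapping are illustrative assumptions, not the project's settings.

```python
from collections import deque
from statistics import median


class RangeToParam:
    """Median-filters ultrasonic distance readings and maps them to 0..1.

    Hypothetical sketch: range limits and window size are illustrative.
    """

    def __init__(self, near_cm=20.0, far_cm=400.0, window=5):
        self.near_cm = near_cm
        self.far_cm = far_cm
        self.readings = deque(maxlen=window)  # sliding window of recent readings

    def update(self, distance_cm):
        self.readings.append(distance_cm)
        # The median ignores a lone outlier in the window, unlike a mean.
        d = median(self.readings)
        d = min(max(d, self.near_cm), self.far_cm)
        # Nearer performer -> value closer to 1.0; farther -> closer to 0.0.
        return (self.far_cm - d) / (self.far_cm - self.near_cm)


if __name__ == "__main__":
    sensor = RangeToParam()
    for reading in [100, 102, 98, 400, 101]:  # 400 is a spurious spike
        print(round(sensor.update(reading), 3))
```

In this run, the 400 cm spike only nudges the median from 100 to 101 cm, so the output stays near 0.79 instead of dropping to 0. The trade-off is that a larger window rejects more noise but responds more slowly to real movement.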

The second scene will involve the wearable interface, with direct/detailed gestural control of audio and video elements from a solo performer.

The third scene will involve a complex interplay of sensors and parameters. The wearable interface will perform time-domain manipulation and transport control on QuickTime materials while driving filters and processors in the audio domain. The scene will also use physical objects sounding on stage, driven by the wearable interface: the interface data will control signals flowing through the objects (in this case cymbals and a snare drum), and audio/spatial relationships will unfold between the performer’s gestures, their proximity to the objects and the sound behaviours. Tim is taking care of the actuator setup.
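One possible approach to accelerometer-driven transport control is to map a tilt axis to a signed playback rate. A dead zone around the rest position keeps the movie paused unless the performer deliberately tilts. This is a hypothetical mapping for illustration only. The input range of -1 to 1, the dead-zone width and the maximum rate are assumed values, and negative rates stand for reverse playback.

```python
def tilt_to_rate(tilt, dead_zone=0.15, max_rate=2.0):
    """Map a tilt reading in [-1, 1] to a playback rate in [-max_rate, max_rate].

    Hypothetical mapping. Readings inside the dead zone give 0.0 (paused);
    outside it the rate rises linearly from 0, so there is no jump at the
    dead-zone edge.
    """
    tilt = min(max(tilt, -1.0), 1.0)
    magnitude = abs(tilt)
    if magnitude <= dead_zone:
        return 0.0
    # Rescale the remaining travel (dead_zone..1) onto 0..max_rate.
    scaled = (magnitude - dead_zone) / (1.0 - dead_zone) * max_rate
    return scaled if tilt > 0 else -scaled


if __name__ == "__main__":
    for t in (-1.0, -0.5, 0.0, 0.1, 0.5, 1.0):
        print(t, round(tilt_to_rate(t), 3))
```

Rescaling the travel beyond the dead zone, rather than simply zeroing small values, avoids a sudden jump in speed as the performer leaves the rest position.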

Ultimately, we are aiming for a fully responsive media environment, in which the stage performer(s) drive both the audio and video elements and feel immersed in the mediascape.